Emotion Picker

Live: https://emotion-picker.netlify.app

We must live in amazing times if AI has temporarily pulled me out of my stupor to create something which I think is really useful. The problem that led here is very practical: how am I going to migrate out of Movable Type so that my content becomes available to AI? MT has been a trusty server-side static site generator, but I need to be able to manipulate my own "brain" locally. h0p3, as always, is years ahead in this and I'm ashamed to say I've been disconnected, partly to immerse myself, because what I'm trying to resolve will finally let me have a conversation with him.

The export of my site into an independent Astro-based multi-site repo was already done, and I was checking my own content when I came across my private dream blog. I've always wanted to properly categorize dreams, and even though MT allows for it, it's a drag. Let's use a prototypical dream as an example: you forgot you must take an exam.

Let's start with a basic taxonomy of a dream: what is it? We never report from inside a dream; we only report on dreams when we are awake, so the waking state contains the experience of the dream state. The data structure therefore needs to represent the dream as a child of the waking report, with dates and times of its own, because the dream is a distinct event from the moment it was experienced. You need to register the date and time when you experienced it, and any special conditions of your state at the time of the dream. Within the dream the present can change and refer to a period of childhood, for example, and the dream in itself becomes a self-contained reality.
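A minimal sketch of that parent/child structure in plain JavaScript. The field names (`reportedAt`, `experiencedAt`, `conditions`, `internalTime`) and the sample values are hypothetical, chosen only for illustration; they do not come from the actual blog export.

```javascript
// Hypothetical shape: a waking report that contains the dream as a child.
// None of these field names come from the real blog export.
function makeDreamReport({ reportedAt, experiencedAt, conditions = [], dream }) {
  return {
    reportedAt: new Date(reportedAt),       // when the report was written down
    experiencedAt: new Date(experiencedAt), // when the dream was actually had
    conditions,                             // state of the dreamer at that time
    dream: {
      summary: dream.summary,
      // The dream's own "present", which can differ from both dates above.
      internalTime: dream.internalTime ?? null,
      emotions: dream.emotions ?? [],
    },
  };
}

// Made-up example values for the prototypical exam dream.
const report = makeDreamReport({
  reportedAt: "2024-05-02T08:30:00Z",
  experiencedAt: "2024-05-02T04:00:00Z",
  conditions: ["slept late"],
  dream: {
    summary: "Forgot I had to take an exam",
    internalTime: "school years",
    emotions: ["anxiety"],
  },
});
```

Keeping `internalTime` inside the nested `dream` object is one way to let the dream's present drift (to childhood, say) without touching the dates of the waking report.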

I was lamenting how haphazard I have been about capturing information, but I was glad to see that I had used a convention of setting the creation date to a time in the past. Of course, I don't remember the original conditions of those dreams, but I feel that adding data to my scarce personal data set is more important than exhaustiveness at this stage.

It was a delight re-reading my dreams, but I didn't feel confident assigning a vocabulary to them. How would I distinguish Rage from Anger if I were to classify my own dreams? Should emotional labels fall into discrete categories at all? This took me down a tremendous rabbit hole that drew on different parts of my personal experience.

Conceptually, I'm well versed in color systems, and mapping emotions to three-dimensional vectors looks a lot like working with one. Perhaps in the future we can build a gamut and profile of emotions so that we understand each other better, with the warning that claiming "that emotion is not in my gamut" might also mean "I have not grown my gamut to account for that emotion".

I could only code this because I've already built color.metod.ac, and because AI exists.

How it works

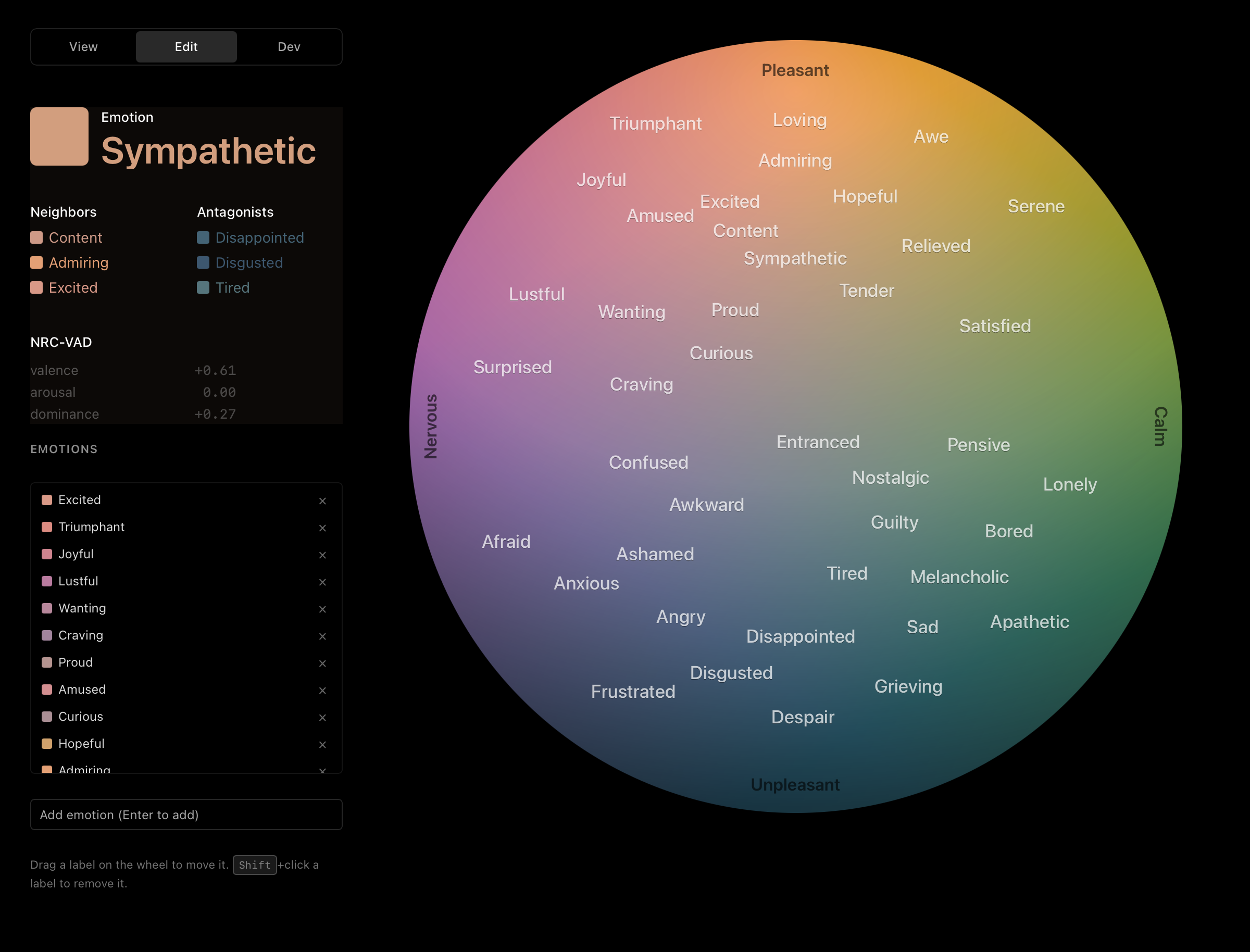

The wheel is a unit disk in (pleasure, arousal) space:

- vertical axis = pleasure, in [-1, +1] — up is pleasant, down is unpleasant

- horizontal axis = arousal, in [-1, +1] — left is activated, right is calm

- a third scalar, dominance, travels alongside as a label-only readout
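The axis layout above can be sketched as a conversion from pointer pixels to wheel coordinates. This is an illustration, not the project's code: `pointerToPA` is a hypothetical name, and it assumes that "activated" corresponds to +1 arousal, so the left edge maps to +1.

```javascript
// Convert a pointer position (pixels, origin at the top-left corner of the
// wheel's bounding square) into (pleasure, arousal) on the unit disk.
// Assumption: activated = +1 arousal, so the left edge maps to +1.
function pointerToPA(x, y, size) {
  const half = size / 2;
  let arousal = (half - x) / half;  // left (+1, activated) to right (-1, calm)
  let pleasure = (half - y) / half; // up (+1, pleasant) to down (-1, unpleasant)
  const radius = Math.hypot(pleasure, arousal);
  if (radius > 1) {
    // Points outside the disk are pulled back onto its edge.
    pleasure /= radius;
    arousal /= radius;
  }
  return { pleasure, arousal };
}
```

For a 400 px wheel, `pointerToPA(200, 0, 400)` lands on the pleasant pole, and a pointer in a corner of the bounding square is clamped to the rim of the disk.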

The colored fill is computed pixel-by-pixel in OKLCH and converted to sRGB. Hue follows the angle around the disk; chroma scales with radius from the center. A radial-gradient mask fades the unpleasant (lower) pole so the page background bleeds through — saturation is preserved because pixels are removed, not mixed with white or black.
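A hedged sketch of the per-pixel color computation. The OKLab-to-linear-sRGB matrices are the ones Björn Ottosson published with OKLab; everything else is an assumption for illustration: the function names, the fixed lightness of 0.75, the maximum chroma of 0.2, where hue zero sits on the disk, and the simple channel clipping for out-of-gamut colors.

```javascript
// OKLCH -> sRGB, returning channels in [0, 1].
function oklchToSrgb(L, C, hDeg) {
  const h = (hDeg * Math.PI) / 180;
  const a = C * Math.cos(h);
  const b = C * Math.sin(h);
  // OKLab -> LMS (cubed) -> linear sRGB, using Ottosson's published matrices.
  const l = (L + 0.3963377774 * a + 0.2158037573 * b) ** 3;
  const m = (L - 0.1055613458 * a - 0.0638541728 * b) ** 3;
  const s = (L - 0.0894841775 * a - 1.2914855480 * b) ** 3;
  const linear = [
    4.0767416621 * l - 3.3077115913 * m + 0.2309699292 * s,
    -1.2684380046 * l + 2.6097574011 * m - 0.3413193965 * s,
    -0.0041960863 * l - 0.7034186147 * m + 1.7076147010 * s,
  ];
  // Clip out-of-gamut channels, then apply the sRGB transfer function.
  return linear.map((v) => {
    const c = Math.min(1, Math.max(0, v));
    return c <= 0.0031308 ? 12.92 * c : 1.055 * c ** (1 / 2.4) - 0.055;
  });
}

// Hue follows the angle around the disk; chroma scales with radius.
// Lightness and maximum chroma are illustrative guesses, not project values.
function wheelColor(pleasure, arousal, maxChroma = 0.2, lightness = 0.75) {
  const radius = Math.min(1, Math.hypot(pleasure, arousal));
  const hue = (Math.atan2(pleasure, arousal) * 180) / Math.PI;
  return oklchToSrgb(lightness, maxChroma * radius, hue);
}
```

At the center of the disk the chroma is zero, so `wheelColor(0, 0)` yields a neutral gray; the color grows more saturated toward the rim.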

Emotion labels live in SVG over the canvas, placed at each emotion's normalized (p, r) coordinate. They're hidden by default and are revealed according to their distance from the cursor, with a soft floor opacity so the wheel never becomes a wall of text. The sidebar shows the nearest emotion to the handle, three closest neighbors, three closest antagonists (nearest to the diametric mirror, -p, -r), and the dataset's raw VAD coordinates. A small dark mirror handle tracks the antagonist position as you drag.
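The sidebar lookup can be sketched as two distance rankings, one around the handle and one around its mirror. This is an assumption-laden illustration: the function names are hypothetical, the tiny dataset is made up (not taken from any lexicon), `r` is assumed to be the second wheel coordinate, and "neighbors" is assumed to exclude the nearest emotion itself.

```javascript
// Made-up coordinates for illustration only.
const emotions = [
  { name: "joy", p: 0.8, r: 0.5 },
  { name: "contentment", p: 0.7, r: -0.5 },
  { name: "excitement", p: 0.5, r: 0.8 },
  { name: "sadness", p: -0.7, r: -0.5 },
  { name: "anger", p: -0.6, r: 0.7 },
  { name: "fear", p: -0.7, r: 0.6 },
  { name: "boredom", p: -0.3, r: -0.7 },
  { name: "calm", p: 0.4, r: -0.8 },
];

// Sort a copy of the dataset by Euclidean distance to (p, r).
function rankByDistance(items, p, r) {
  return items
    .map((e) => ({ ...e, d: Math.hypot(e.p - p, e.r - r) }))
    .sort((x, y) => x.d - y.d);
}

function describeHandle(items, p, r, k = 3) {
  const ranked = rankByDistance(items, p, r);
  return {
    nearest: ranked[0].name,
    neighbors: ranked.slice(1, k + 1).map((e) => e.name),
    // Antagonists are the emotions nearest to the diametric mirror (-p, -r).
    antagonists: rankByDistance(items, -p, -r).slice(0, k).map((e) => e.name),
    mirror: { p: -p, r: -r },
  };
}

const info = describeHandle(emotions, 0.75, 0.45);
// info.nearest is "joy"; info.antagonists starts with "sadness"
```

The `mirror` point is also where the small dark handle would sit while dragging.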

Three datasets ship by default:

- Mark's map of emotions — my curated 41-emotion layout. Editable, persisted to localStorage, and the default landing dataset.

- NRC VAD (as-is) — pure NRC VAD Lexicon source values (Mohammad 2018). Read-only.

- Cowen & Keltner (2017) — the 27 emotion categories from the C&K paper, with NRC-VAD lookups for coordinates. Read-only.

Three modes:

- view — pick: drag on the wheel, read out the nearest emotion.

- edit — drag labels to relocate them, shift-click to remove, type a name to add a new one. Editing a read-only dataset auto-forks it as a copy.

- dev — everything in edit, plus dataset CRUD, the unpleasant-fade slider, four globe-mask geometry sliders, a coords copy field, and an "export dataset as JSON" dump.
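The auto-fork behavior in edit mode could look roughly like this. It is a sketch under assumptions: the dataset shape (`id`, `name`, `readOnly`, `emotions`), the `activeId` field, the copy-naming scheme, and both function names are hypothetical.

```javascript
// Return a dataset that is safe to mutate: the dataset itself when it is
// writable, or a freshly forked copy when it is read-only.
function editableTarget(state, datasetId) {
  const current = state.datasets.find((d) => d.id === datasetId);
  if (!current.readOnly) return current;
  const copy = {
    ...current,
    id: `${current.id}-copy-${state.datasets.length}`, // hypothetical id scheme
    name: `${current.name} (copy)`,
    readOnly: false,
    emotions: current.emotions.map((e) => ({ ...e })), // copy each flat record
  };
  state.datasets.push(copy);
  state.activeId = copy.id; // switch the UI to the fork
  return copy;
}

// Relocate a label; read-only sources are never mutated.
function moveLabel(state, datasetId, emotionName, p, r) {
  const target = editableTarget(state, datasetId);
  const emotion = target.emotions.find((e) => e.name === emotionName);
  emotion.p = p;
  emotion.r = r;
  return target;
}
```

Routing every edit through one helper like `editableTarget` keeps the read-only guarantee in a single place instead of in each drag or delete handler.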

State is persisted as a single userState object under the emotion-picker:v1 key in localStorage. The two read-only built-ins are re-seeded from source on every load (so source-level edits flow through), while the writable Mark's map is seeded once and then mutates in place.
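A minimal sketch of that seeding rule. Only the `emotion-picker:v1` key comes from the text above; the function names and the dataset shape (`id`, `readOnly`) are assumptions. The `storage` parameter is anything with `getItem`/`setItem`, so `window.localStorage` would be passed in a browser.

```javascript
const STORAGE_KEY = "emotion-picker:v1"; // key named in the text

// Load the persisted state and merge in the built-in datasets.
function loadUserState(storage, builtIns) {
  const saved = JSON.parse(storage.getItem(STORAGE_KEY) ?? "null") ?? { datasets: [] };
  const byId = new Map(saved.datasets.map((d) => [d.id, d]));
  for (const source of builtIns) {
    // Read-only built-ins are always overwritten from source;
    // a writable built-in is seeded only when nothing is stored for it yet.
    if (source.readOnly || !byId.has(source.id)) {
      byId.set(source.id, JSON.parse(JSON.stringify(source)));
    }
  }
  return { ...saved, datasets: [...byId.values()] };
}

// Write the whole state back as one JSON object under the single key.
function saveUserState(storage, state) {
  storage.setItem(STORAGE_KEY, JSON.stringify(state));
}
```

With this rule, an edit made to a read-only built-in in the source files shows up on the next load, while edits the user made to the writable dataset survive.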

Stack: vanilla HTML/CSS/JS, d3 for the drag behaviors and SVG plumbing, no build step. Hosted on Netlify.

Credit

I built this with Claude (Claude Code, Opus 4.7) acting as an agent of my will. I described what I wanted; Claude refactored the data model, designed the sidebar, wrote the mode-aware drag handling, looked up the VAD coordinates for Cowen & Keltner's 27 emotions, set up the Netlify deploy, and shaped this README from my rough draft. In my opinion Claude was spectacular in capacity and reasoning. I'm deeply humbled.